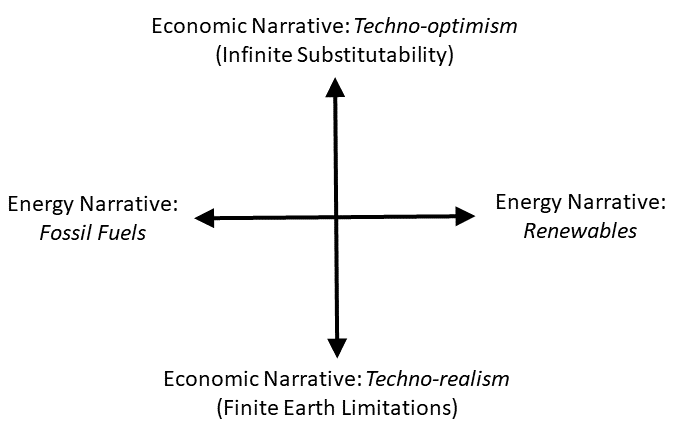

In my book The Economic Superorganism: Beyond the Competing Narratives on Energy, Growth, and Policy, I describe narratives along two axes (see Figure 1): energy and economics. Because people disagree as to the costs, capabilities, and benefits of different energy technologies and resources, proponents of different visions use narratives to convince stakeholders of the validity of their positions.

Figure 1. A diagram of narratives along two dimensions: energy (fossil versus renewable) and economics (technological optimism of infinitely substitutable technology versus technological realism that the finite Earth imposes limits to growth).

The two energy narratives (fossil fuels vs. renewable energy) characterize the extreme views regarding the desired sources for our future energy system that best meet our future social and economic needs:

Energy Narrative: Fossil Fuels Are the Future

This narrative recognizes that fossil fuels enabled us to achieve what we have today. A proponent might say: “The physical fundamentals of fossil fuels, such as high energy-density and portability, ensure low cost and their continued dominance. Why not use them? Renewable energy technologies require subsidies to entice investment because they cannot achieve the historical or present levels of low cost and productivity of fossil fuels and related technologies. Therefore, we should promote increased fossil fuel use for the foreseeable future. Fossil fuels, and the technologies we have developed to burn them, enable us to shape and control the environment rather than the reverse situation before we invented fossil-fueled machines. Further, fossil fuels are the best hope to bring poor countries out of poverty while continuing to increase prosperity within developed countries.”

Energy Narrative: Renewable Energy Is the Future

This narrative states that renewable energy technologies and resources can sufficiently substitute for the services currently provided by fossil fuels. A proponent might say: “Thank you, fossil fuels, but we’ve modernized. We don’t need or want you anymore. Fossil fuel production and consumption create environmental harm both locally over the short term (e.g., air and water contamination) and globally over the long term (e.g., climate change) to such a degree that their continued unmitigated use ensures environmental ruin that will lead to economic ruin. In addition, the concentration of fossil fuel resources means that countries and citizens have unequal ownership of them, creating geopolitical instability over extraction and distribution. Thankfully, renewable energy technologies are now cheap enough to transition from fossil fuels. Further, a renewable energy system is the best hope to bring poor countries out of poverty while continuing to increase prosperity within developed countries.”

Both energy narratives use economic narratives to justify their arguments, and these arguments shape energy policies that affect each one of us. Economic theory in turn informs us how to perform calculations that provide insight into the ramifications of choosing one energy pathway versus another. My book discusses how one’s economic viewpoint, or narrative, can lead one to ignore important similarities and differences between fossil and renewable energy systems. Here, I only state the economic narratives for consideration.

Economic Narrative: Technological Optimism (There Is Infinite Substitution of Technology to Achieve Growth and Social Outcomes)

This narrative posits unbounded technological change that creates substitutes for whatever we desire. It does not necessarily deny that the Earth is finite, but it does not believe that this fact affects economic or physical outcomes that impact the overall human condition. It is the view of most mainstream economists. A proponent might say: “Technological innovation has always addressed, and will always address, the pressing needs of society. In order to promote the search for solutions, we need a signal. That signal is the price of a good, or a ‘bad’ (e.g., air pollution), and the signal is provided by setting up a market. Therefore we must establish and promote free markets, private ownership and profits via capitalism, and business competition. This is the way toward continued growth and prosperity. With regard to energy, as long as the aforementioned criteria govern the economy, its price always decreases, so there is no need to worry. Markets best address socio-economic issues because they process information better than any human regulator or government agency.” Got a problem? Make a market for it.

Economic Narrative: Technological Realism (The Finite Earth and Laws of Physics Impose Biophysical Constraints on Growth that Affect Social Outcomes)

This narrative takes to heart that the Earth is finite. It is the position of many ecologists, physical scientists, and some economists. A proponent might say: “Humans need food to survive and our economy requires energy consumption and physical resources to function. These facts very much matter for economic reasons because the feedbacks from physical growth on a finite planet will eventually force changes in structural relations within our economy and society more broadly. These changes can have positive or negative outcomes for our perception of the human condition, but to create positive outcomes, we must perceive, accept, and adjust to the physical limits of a finite Earth and relate our economy to physical laws and processes. Markets can work, but they have problems. Theoretically they can include all important pieces of information, but practically, finite time and incomplete information prevent the formation of pure price signals.” The narrative is summed up well by a statement attributed to economist Kenneth Boulding: “Anyone who believes that exponential growth can go on forever in a finite world is either a madman or an economist.”

Consider these four narratives along the two axes of Figure 1 anytime you read an article, policy, or book promoting or disparaging a particular energy policy or technology.